Building ViewShift: When Windows Lies to You About Your Monitors

I have three screens on my desk. A 34" ultrawide in the centre for work, a small BenQ tucked to the right for reference material, and a big TV on the left wall for gaming and media. Every time I switch between modes — coding, sim racing, watching something — I'm clicking through Windows display settings, dragging windows around, and pressing the TV remote. It takes two minutes and breaks flow every single time.

So I started building ViewShift.

The Idea

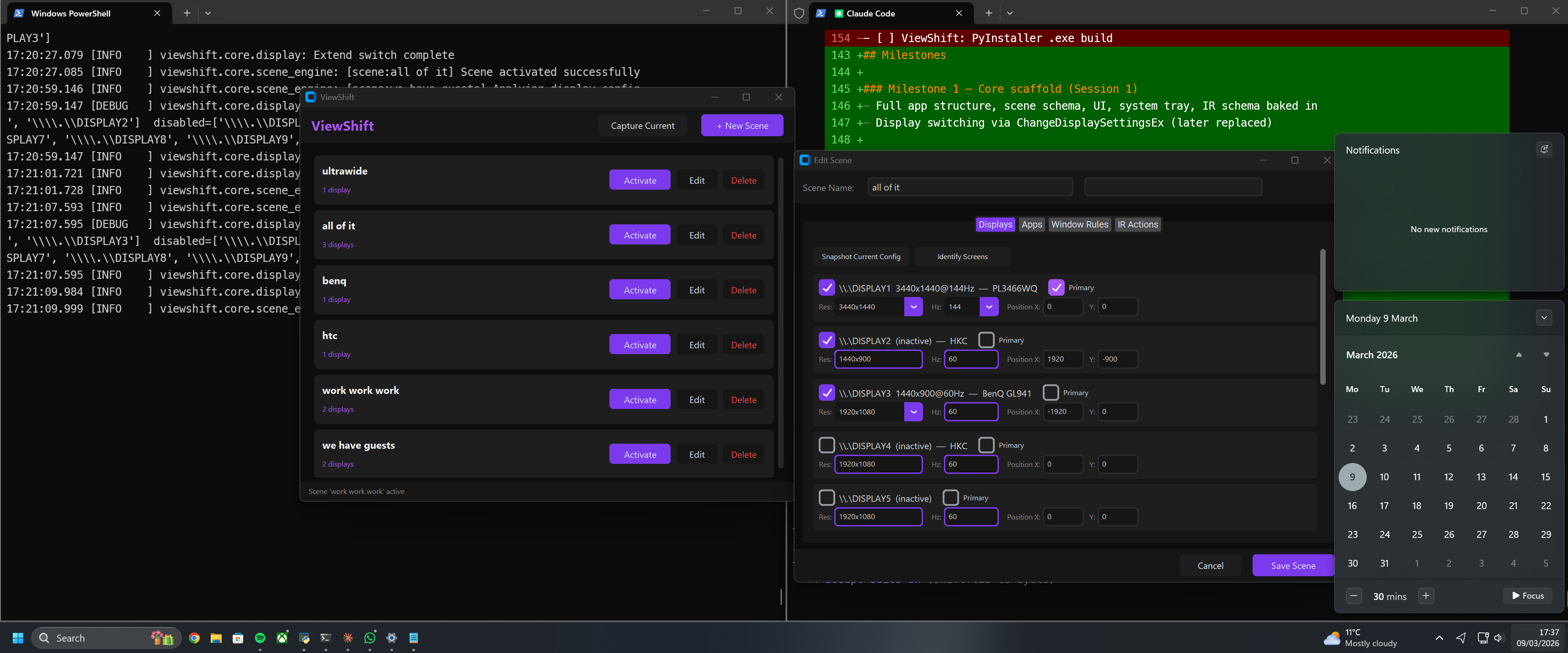

ViewShift is a display profile manager for Windows. You create named Scenes — each one defines which monitors are active, their arrangement, apps to launch, where to put already-running windows, and eventually IR commands to fire at a TV. You switch scenes from the system tray in one click.

Simple concept. The implementation turned out to be anything but.

The First Lie: ChangeDisplaySettingsEx Does Nothing

The obvious Windows API for switching displays is ChangeDisplaySettingsEx. Every Stack Overflow answer, every old forum post — "just call ChangeDisplaySettingsEx and you're done."

Except it isn't. On any modern system with an NVIDIA or AMD driver, ChangeDisplaySettingsEx returns DISP_CHANGE_SUCCESSFUL and then does absolutely nothing. The drivers intercept it. The displays don't move.

The modern API is the pair QueryDisplayConfig and SetDisplayConfig, part of the Windows CCD (Connecting and Configuring Displays) API. It's documented, it works, and almost nobody uses it because the struct layouts are fiddly and the error codes are opaque.
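
As a rough illustration of the entry point into CCD, here is a minimal sketch that asks Windows how many path and mode entries a later QueryDisplayConfig call would need. GetDisplayConfigBufferSizes and the QDC_* values are from the Windows SDK; the wrapper name active_buffer_sizes is made up for this example, and the call only does anything on Windows.

```python
import ctypes
import sys

# Flag values from the Windows SDK (wingdi.h).
QDC_ALL_PATHS = 0x1
QDC_ONLY_ACTIVE_PATHS = 0x2


def active_buffer_sizes(flags=QDC_ONLY_ACTIVE_PATHS):
    """Return (path_count, mode_count) needed for a QueryDisplayConfig call.

    Hypothetical helper name; the underlying API is GetDisplayConfigBufferSizes.
    """
    if sys.platform != "win32":
        raise OSError("the CCD API is only available on Windows")
    num_paths = ctypes.c_uint32(0)
    num_modes = ctypes.c_uint32(0)
    status = ctypes.windll.user32.GetDisplayConfigBufferSizes(
        flags, ctypes.byref(num_paths), ctypes.byref(num_modes)
    )
    if status != 0:  # ERROR_SUCCESS is 0
        raise OSError(f"GetDisplayConfigBufferSizes failed with error {status}")
    return num_paths.value, num_modes.value


# Only attempt the real call on Windows.
if __name__ == "__main__" and sys.platform == "win32":
    print(active_buffer_sizes())
```

The two counts are what you would use to allocate the path and mode arrays before calling QueryDisplayConfig itself.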

The Second Lie: Your Struct Size Is Wrong

CCD uses a type called DISPLAYCONFIG_MODE_INFO. It contains a union — either a target mode or a source mode — and some header fields. In Python via ctypes, you need to declare this struct with the right size: 64 bytes exactly.

The union's largest member is DISPLAYCONFIG_VIDEO_SIGNAL_INFO, which is 48 bytes. So the union padding is 48. Not 64. Not 32. 48.

I originally used 64 bytes of padding, which made the struct 80 bytes. Windows was writing 64-byte structs into my buffer. Every mode after index 0 was being read from the wrong offset. The mode data looked plausible — just subtly wrong. I only caught it by printing raw uint32 values from the buffer and noticing that mode[1]'s infoType field contained 3440 (a resolution width) instead of 1 or 2.
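
The stride mismatch is easy to reproduce without touching Windows at all. The sketch below builds a synthetic buffer of two 64-byte mode records (the values are invented for illustration, not captured from a real system) and reads the infoType of record 1 using both the correct 64-byte stride and the wrong 80-byte one.

```python
import struct

# 16-byte header (infoType, id, adapter LUID low/high) + 48-byte union = 64 bytes.
MODE_RECORD = struct.Struct("<IIIi48s")
# Source-mode view of the union: width, height, pixelFormat, position x, position y.
SOURCE_MODE = struct.Struct("<IIIii")


def make_source_mode(mode_id, width, height):
    """Pack one synthetic 64-byte record describing a source mode."""
    union = SOURCE_MODE.pack(width, height, 4, 0, 0)  # 4 = 32 bpp pixel format
    # infoType 1 = source; the LUID value is arbitrary; "48s" zero-pads the union.
    return MODE_RECORD.pack(1, mode_id, 0x1234, 0, union)


# What Windows would write: records packed back to back at a 64-byte stride.
buffer = make_source_mode(0, 1920, 1080) + make_source_mode(1, 3440, 1440)


def info_type_at(index, stride):
    """Read the uint32 at the start of record `index`, assuming `stride` bytes per record."""
    return struct.unpack_from("<I", buffer, index * stride)[0]


print(info_type_at(1, 64))  # 1    (correct stride: the real infoType)
print(info_type_at(1, 80))  # 3440 (wrong stride: lands on record 1's width field)
```

With the 80-byte stride, the read for record 1 starts 16 bytes too late, which is exactly where the union (and so the source width) begins.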

# Wrong — struct is 80 bytes, Windows writes 64

("padding", ctypes.c_uint8 * 64)

# Correct — union = max(VIDEO_SIGNAL_INFO=48, SOURCE_MODE=20) = 48 bytes

("padding", ctypes.c_uint8 * 48)

While I was in there, I also found the mode type constants were swapped:

# Wrong

DISPLAYCONFIG_MODE_INFO_TYPE_TARGET = 1

DISPLAYCONFIG_MODE_INFO_TYPE_SOURCE = 2

# Correct (per Windows SDK)

DISPLAYCONFIG_MODE_INFO_TYPE_SOURCE = 1

DISPLAYCONFIG_MODE_INFO_TYPE_TARGET = 2

The Third Lie: SDC_TOPOLOGY_EXTEND Will Save You

Once a display goes inactive — say you switch to single-monitor mode — Windows removes it from the active topology. When you want to bring it back, the obvious approach is SDC_TOPOLOGY_EXTEND: "hey Windows, just extend all the displays." That flag exists. It sounds right.

On this NVIDIA system, it returns error 87 (ERROR_INVALID_PARAMETER). Every time. No explanation.

SDC_TOPOLOGY_SUPPLIED — "here are the paths, figure out the modes" — also returns 87.

The only flag combination that reliably works is SDC_USE_SUPPLIED_DISPLAY_CONFIG: you provide the complete path and mode data, Windows applies exactly what you give it.
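
For reference, a sketch of what such a call could look like through ctypes. The SDC_* values are from the Windows SDK; pairing the flag with SDC_APPLY is an assumption on my part (SetDisplayConfig needs either SDC_APPLY or SDC_VALIDATE to know whether to commit), and apply_supplied_config is a hypothetical wrapper name. The function expects ctypes arrays of DISPLAYCONFIG_PATH_INFO and DISPLAYCONFIG_MODE_INFO that have already been filled in.

```python
import ctypes
import sys

# Flag values from the Windows SDK (wingdi.h).
SDC_TOPOLOGY_EXTEND = 0x00000004
SDC_TOPOLOGY_SUPPLIED = 0x00000010
SDC_USE_SUPPLIED_DISPLAY_CONFIG = 0x00000020
SDC_APPLY = 0x00000080

# Assumed combination: apply exactly the paths and modes that are passed in.
APPLY_SUPPLIED = SDC_APPLY | SDC_USE_SUPPLIED_DISPLAY_CONFIG


def apply_supplied_config(path_array, mode_array, flags=APPLY_SUPPLIED):
    """Hand a complete path/mode config to SetDisplayConfig (Windows only)."""
    if sys.platform != "win32":
        raise OSError("SetDisplayConfig is only available on Windows")
    status = ctypes.windll.user32.SetDisplayConfig(
        len(path_array), ctypes.byref(path_array),
        len(mode_array), ctypes.byref(mode_array),
        flags,
    )
    if status != 0:  # 87 here would be ERROR_INVALID_PARAMETER
        raise OSError(f"SetDisplayConfig failed with error {status}")


print(hex(APPLY_SUPPLIED))  # 0xa0
```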

So the solution is a config cache. Before switching to single-display mode, save the current full multi-display config — paths and modes — in memory. When you need to bring an inactive display back online, restore from cache.

def switch_to_single(gdi_name):

    paths, modes = query_display_config(QDC_ONLY_ACTIVE_PATHS)

    if len(paths) > 1:

        _save_cache(paths, modes)  # save before switching away

    # ... switch to single display

The Fourth Lie: list() Is a Deep Copy

It isn't.

list(paths) creates a new list, but the ctypes struct objects inside it are the same objects. When the switch function later modified path.sourceInfo.modeInfoIdx to remap mode indices, it silently corrupted the cached data. The next restore attempt sent garbage to Windows and got error 87 back.

# Wrong — same struct objects, mutations corrupt the cache

_cache_paths = list(paths)

# Correct — copy each struct

_cache_paths = [copy.copy(p) for p in paths]
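
The difference is easy to see with a pair of stand-in structs. SourceInfo and PathInfo below are cut-down, hypothetical versions of the real path structures, just large enough to show the aliasing.

```python
import copy
import ctypes


class SourceInfo(ctypes.Structure):
    _fields_ = [("id", ctypes.c_uint32), ("modeInfoIdx", ctypes.c_uint32)]


class PathInfo(ctypes.Structure):
    _fields_ = [("sourceInfo", SourceInfo), ("flags", ctypes.c_uint32)]


paths = [PathInfo(), PathInfo()]
paths[0].sourceInfo.modeInfoIdx = 3

shallow_cache = list(paths)                    # new list, same struct objects
copied_cache = [copy.copy(p) for p in paths]   # new struct objects with their own buffers

# Later, the switch code remaps the mode index on the live path.
paths[0].sourceInfo.modeInfoIdx = 0

print(shallow_cache[0].sourceInfo.modeInfoIdx)  # 0 (the cache changed with it)
print(copied_cache[0].sourceInfo.modeInfoIdx)   # 3 (the cache kept the saved value)
```

copy.copy is enough here because the nested sourceInfo lives inside the parent struct's own buffer, so copying the parent copies it too.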

Surviving Restarts: Disk-Persisted Baseline

The cache only exists in memory. If the app starts while you're already in single-display mode — which is common, since that's where the last scene left things — there's nothing to restore from.

The fix: write the cache to disk as raw bytes whenever it's updated. On startup, if only one display is active, load the cache file. The struct layout is fixed, and on this machine the adapter LUIDs embedded in the data have not changed without a hardware change, so the binary data has been safe to persist. (Windows itself only guarantees a LUID until the next reboot, so this is worth verifying on other hardware.)

~/.viewshift/display_cache.bin

The cache also never downgrades — if a 3-display config is saved, a later 2-display startup won't overwrite it.
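
A minimal sketch of how that persistence and the no-downgrade rule could fit together. The file format here (two uint32 counts followed by the raw struct bytes) is an assumption for illustration, as are the function names; FakePath and FakeMode stand in for the real CCD structs so the example runs anywhere.

```python
import ctypes
import struct
import tempfile
from pathlib import Path

HEADER = struct.Struct("<II")  # assumed header: path count, mode count


def save_cache(cache_file, paths, modes):
    """Write the cache unless it would replace a config with more displays."""
    if cache_file.exists():
        saved_paths, _ = HEADER.unpack_from(cache_file.read_bytes())
        if len(paths) < saved_paths:
            return False  # never downgrade
    payload = b"".join(bytes(item) for item in [*paths, *modes])
    cache_file.write_bytes(HEADER.pack(len(paths), len(modes)) + payload)
    return True


def load_cache(cache_file, path_type, mode_type):
    """Rebuild independent struct objects from the raw bytes on disk."""
    data = cache_file.read_bytes()
    n_paths, n_modes = HEADER.unpack_from(data)
    offset = HEADER.size
    paths, modes = [], []
    for count, struct_type, out in ((n_paths, path_type, paths),
                                    (n_modes, mode_type, modes)):
        for _ in range(count):
            out.append(struct_type.from_buffer_copy(data, offset))
            offset += ctypes.sizeof(struct_type)
    return paths, modes


class FakePath(ctypes.Structure):   # stand-in for DISPLAYCONFIG_PATH_INFO
    _fields_ = [("targetId", ctypes.c_uint32)]


class FakeMode(ctypes.Structure):   # stand-in for DISPLAYCONFIG_MODE_INFO
    _fields_ = [("width", ctypes.c_uint32)]


cache_file = Path(tempfile.mkdtemp()) / "display_cache.bin"
three = [FakePath(1), FakePath(2), FakePath(3)]
save_cache(cache_file, three, [FakeMode(3440)])
downgraded = save_cache(cache_file, three[:2], [FakeMode(3440)])  # skipped

restored_paths, restored_modes = load_cache(cache_file, FakePath, FakeMode)
print(downgraded)                             # False
print([p.targetId for p in restored_paths])   # [1, 2, 3]
```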

To make this explicit for new users, there's a Save Baseline button. Get all your displays on, click it, and that config is locked in permanently. ViewShift can then restore any display from any starting state.

Stable Monitor Identity: EDID Names

Windows assigns display numbers — \\.\DISPLAY1, \\.\DISPLAY2, \\.\DISPLAY3 — and they're not stable. After a reboot or after switching display modes, the numbers can change. A scene that says "switch to \\.\DISPLAY2" might switch to the wrong monitor.

The CCD API provides a way out: DisplayConfigGetDeviceInfo returns the monitor's EDID model name — "PL3466WQ", "BenQ GL941", "HKC". These come from the monitor's firmware and don't change.

ViewShift scenes store both the GDI name and the EDID friendly name. The resolver checks whether they agree. If they don't — the GDI number points to a different monitor than the friendly name suggests — it trusts the friendly name and finds the right \\.\DISPLAYx for the current session.
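
The resolver logic can be sketched in a few lines. This is an illustration of the rule described above rather than ViewShift's actual code: resolve_gdi_name is a hypothetical name, and live_displays is assumed to be a dict built from the current CCD query that maps GDI names to EDID friendly names.

```python
def resolve_gdi_name(scene_gdi, scene_friendly, live_displays):
    """Return the GDI name that currently belongs to the scene's monitor.

    live_displays maps current GDI names to EDID friendly names.
    """
    # Fast path: the stored GDI name still points at the expected monitor.
    if live_displays.get(scene_gdi) == scene_friendly:
        return scene_gdi
    # Otherwise trust the friendly name and look up wherever it lives now.
    for gdi_name, friendly in live_displays.items():
        if friendly == scene_friendly:
            return gdi_name
    return None  # monitor not present in this session


# Windows has renumbered: the BenQ used to be DISPLAY2 when the scene was saved.
live = {
    r"\\.\DISPLAY1": "PL3466WQ",
    r"\\.\DISPLAY2": "HKC",
    r"\\.\DISPLAY3": "BenQ GL941",
}
print(resolve_gdi_name(r"\\.\DISPLAY2", "BenQ GL941", live))  # \\.\DISPLAY3
```

Two identical monitors would report the same friendly name, so a lookup like this would need an extra tiebreaker in that case.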

Capture Current

The scene editor has a Snapshot button that fills in the current live display state. But there's a faster path: Capture Current on the main window. Get your displays exactly how you want them, click Capture, type a name, done. The scene is saved immediately with the correct EDID names and positions pulled straight from the CCD API.

One rule: always capture scenes with all displays active. If you capture in single-display mode, Windows renumbers the remaining displays and the scene data comes out wrong.

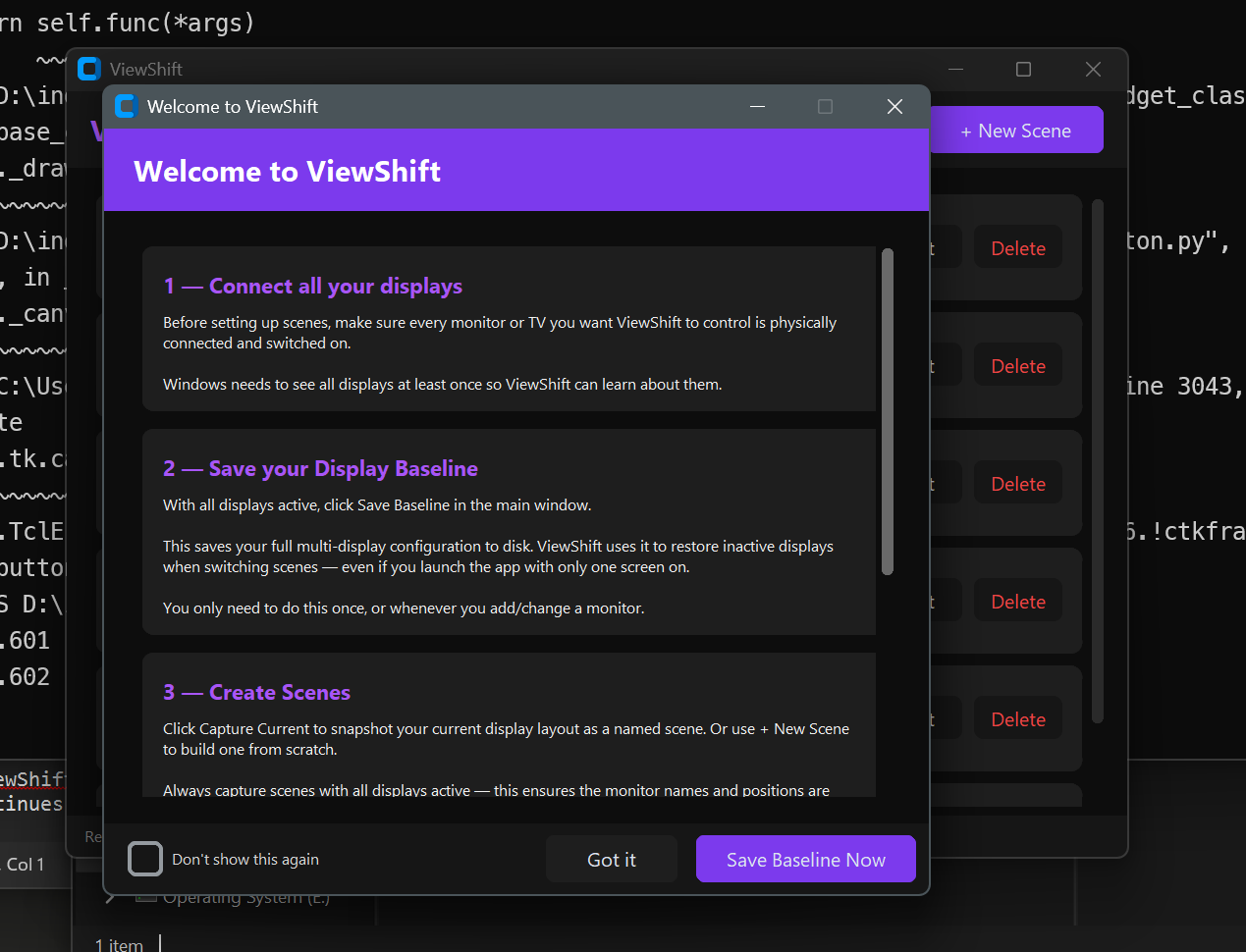

First-Run Setup Guide

There's one catch with the cache approach — it needs to see all your displays active at least once to know about them.

ViewShift handles this with a first-run guide. On first launch it walks you through the setup: connect everything, save a baseline, create scenes, use the tray. At the bottom there's a Save Baseline Now button that locks in your full display config to disk in one click.

After that it never needs to see all displays active at startup again. The baseline is always there.

The TV Problem — and What's Coming Next

Most of my monitors are just monitors. The TV is a different story. It doesn't support CEC — so there's no software way to turn it on or change its input over HDMI. You still need the remote.

The plan for this is IRNode: an ESP32 on the local network with an IR LED pointed at the TV. ViewShift fires an HTTP request at it when a scene activates; the ESP32 fires the right IR code; the TV turns on and switches to the right input.

ViewShift: POST http://192.168.0.50/command

Body: { "device": "tv", "command": "power_on" }

ESP32: fires 940nm IR pulse sequence

TV: does what it's told
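
On the ViewShift side, the request in that flow needs nothing beyond the standard library. The sketch below uses the URL and JSON body shown above; the function names, the JSON content type, and the timeout are assumptions, since the IRNode firmware isn't built yet.

```python
import json
import urllib.request

IRNODE_URL = "http://192.168.0.50/command"  # address from the flow above


def build_ir_request(device, command, url=IRNODE_URL):
    """Build (but do not send) the POST request for one IR command."""
    body = json.dumps({"device": device, "command": command}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},  # assumed content type
        method="POST",
    )


def send_ir_command(device, command, timeout=2.0):
    """Send the command and return the HTTP status code."""
    request = build_ir_request(device, command)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


request = build_ir_request("tv", "power_on")
print(request.get_method(), request.full_url)
print(request.data)
```

A short timeout matters here: a scene switch shouldn't hang because the ESP32 is unplugged.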

No CEC. No proprietary protocols. An ESP32 costs £3-5. The IR receiver for learning codes from your existing remote is another £2.

The IR action schema is already baked into ViewShift scenes — each scene can carry a list of IR commands to fire on activation. IRNode is the next build. The firmware design is done; the hardware is on the bench. That'll be its own post.

Where It Is Now

Display switching is working reliably across a 3-monitor NVIDIA desktop: single display, full extend, and 2-of-3 subsets. The baseline config survives restarts. Scenes are identified by EDID name, so they survive Windows renumbering displays on reboot. A first-run setup guide walks new users through the whole thing.

Still to come: IRNode hardware, PyInstaller executable, and the license system before release.

ViewShift is being built as a commercial product — a proper tool for people who switch contexts at a desk all day. More updates as development continues.
